In this book, Daniel Kahneman, winner of the Nobel Memorial Prize in Economics, presents decades of research to help us understand what really goes on inside our heads. In this free version of our Thinking, Fast and Slow summary, we’ll share the key ideas in the book to give an overview of how our brain works (our 2 mental systems), some of our key mental heuristics (intuitive biases and errors of judgement), and how to make better decisions.

Physicians have a large set of labels to diagnose and treat diseases (e.g. identify symptoms, possible causes, interventions and cures). Likewise, to understand how we make judgements and choices, we need a richer vocabulary to describe how our mind works.

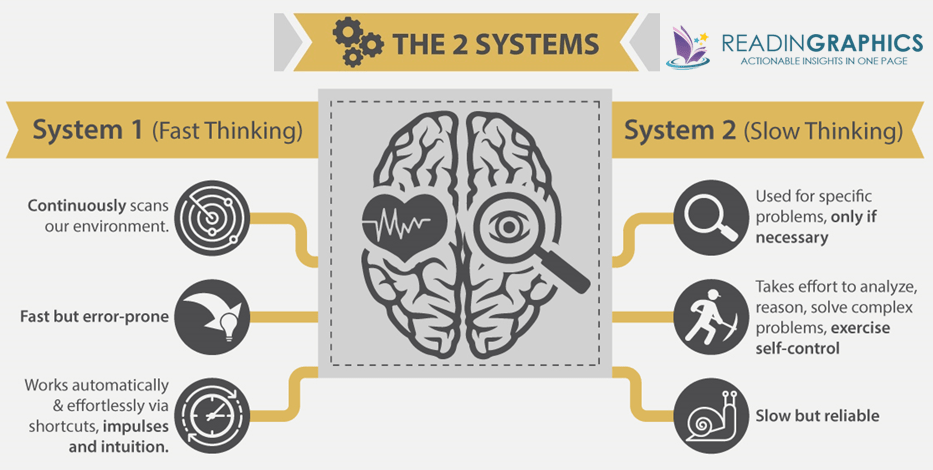

Thinking Fast and Slow: The 2 Systems

Kahneman explains how the human brain works by simplifying it into 2 systems, one fast and one slow:

System 1 is Fast

It operates automatically and involuntarily; it is unconscious, can’t be stopped, and runs continuously. We apply it effortlessly and intuitively to everyday decisions, e.g. when we drive, recall our age, or interpret someone’s facial expression.

System 2 is Slow

It’s only called upon when necessary to reason, compute, analyze and solve problems. It confirms or corrects System 1’s judgements and is more reliable, but it takes time, effort and concentration.

It takes energy to think and exercise self-control. Our mental capacity gets depleted with use, and we’re programmed to take the path of least resistance. In the book, Kahneman elaborates on the “Law of Least Effort”, and how System 1 and System 2 work together to affect our perceptions and decisions. We need both systems, and the key is to become aware when we’re prone to mistakes, so we can avoid them when the stakes are high. Get more details from our complete 15-page summary.

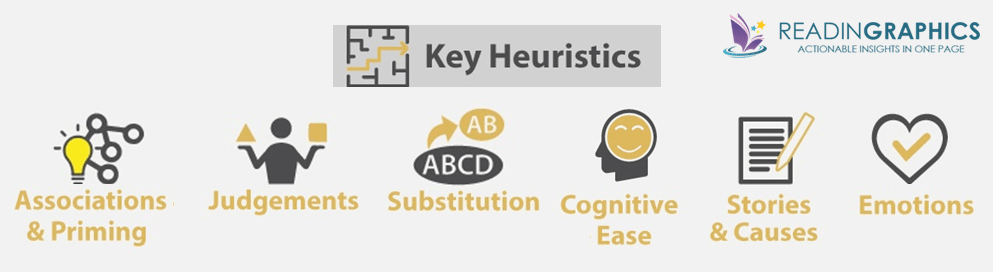

Heuristics and How System 1 Works

In order to quickly process the tons of stimuli that we take in daily, System 1 uses “heuristics” or mental shortcuts. These are fast but often unreliable, and they make us susceptible to others’ suggestions or influence. Here is one of the key heuristics detailed in the book:

For instance, when consciously or subconsciously exposed to an idea, we’re “primed” to think about associated idea(s), memories & feelings. This is the “Associations and Priming” heuristic. If we’ve been talking about food, we’ll fill in the blank SO_P with a “U”, but if we’ve been talking about cleanliness, we’ll fill in the same blank with an “A”. People reading about the elderly unconsciously walk slower, and people who are asked to smile find jokes funnier. Each associated idea evokes even more ideas, memories, and feelings. This is called the associative mechanism.

Essentially, System 1 works using shortcuts like associations, stories and approximations, tends to confuse correlation with causality, and jumps to inaccurate conclusions. System 2 is supposed to be our inner skeptic, evaluating and validating System 1’s impulses and suggestions. But it’s often too overloaded or lazy to do so. This results in intuitive biases and errors in our judgement. Get an overview of the remaining heuristics from our complete book summary.

Heuristics Cause Biases & Errors

Kahneman moves on to explain many of these biases and errors.

Here are a couple of examples:

Small Sample Sizes

Most of us know that small sample sizes are not as representative as large samples. Yet, System 1 intuitively believes small sample outcomes without validation. We make decisions based on insufficient or unrepresentative data. Moreover, System 1 suppresses doubt by constructing coherent stories and attributing causality. Once a wrong conclusion is accepted as “true”, it triggers our associative mechanism, spreading related ideas through our belief systems.
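To see why small samples mislead, here is a minimal sketch that computes, for a perfectly fair 50/50 process, how often a sample looks lopsided (70% or more one way). The function name and the 70% cut-off are our own illustrative choices, not something taken from the book.

```python
from math import comb


def prob_lopsided(n: int, extreme_pct: int = 70) -> float:
    """Chance that n fair 50/50 trials show at least `extreme_pct` percent
    of one outcome (either way). Integer comparisons avoid rounding issues."""
    ways = sum(
        comb(n, k)
        for k in range(n + 1)
        if 100 * k >= extreme_pct * n or 100 * k <= (100 - extreme_pct) * n
    )
    return ways / 2 ** n


if __name__ == "__main__":
    for n in (10, 100):
        print(n, prob_lopsided(n))
    # n = 10 gives 352/1024 = 0.34375; n = 100 gives a far smaller value
```

With only 10 observations, roughly a third of samples look dramatically skewed even though nothing unusual is going on. With 100 observations, such a result becomes very rare. This is the kind of check System 1 never runs before building a story around a small sample.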

Causes Over Statistics

Statistical data are facts about the population that a case belongs to, e.g. “50% of cabs are blue”. Causal data are facts that change our view of how an individual case came about, e.g. “blue cabs are involved in 80% of road accidents” – we may infer from the latter that blue cab drivers are more reckless. In the overview of key heuristics, we learn how System 1 thinks fast using categories and stereotypes, and likes causal explanations. When we’re given both statistical data and causal data, we tend to focus on the causal data and neglect or even ignore the statistical data. In short, we favor stories with explanatory power over mere data.
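The “reckless blue cab drivers” inference actually depends on both figures in the example above. The sketch below works through the arithmetic; the helper name is hypothetical, and the second scenario (blue cabs making up 80% of the fleet) is our own assumption added for contrast.

```python
def relative_accident_rate(fleet_share: float, accident_share: float) -> float:
    """How many times more accident-prone (per cab) a group is than the
    rest of the fleet, given its share of cabs and its share of accidents."""
    group_rate = accident_share / fleet_share
    rest_rate = (1 - accident_share) / (1 - fleet_share)
    return group_rate / rest_rate


if __name__ == "__main__":
    # Figures from the example: 50% of cabs are blue, 80% of accidents involve blue cabs.
    print(relative_accident_rate(0.5, 0.8))  # about 4: blue cabs crash ~4x as often per cab
    # Hypothetical contrast: if blue cabs were 80% of the fleet, the same 80% means nothing.
    print(relative_accident_rate(0.8, 0.8))  # 1.0: no difference between colours
```

The “80% of accidents” figure only tells a causal story once it is compared against the plain statistic of how many cabs are blue, which is exactly the number we tend to ignore.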

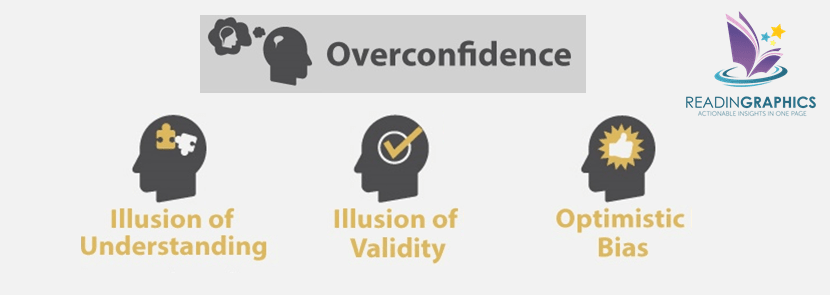

Heuristics Cause Overconfidence

We feel confident when our stories seem coherent and we are at cognitive ease. Unfortunately, confidence does not mean accuracy. Kahneman explains 3 main reasons why we tend to be overconfident in our own assessments.

Specifically, we’re overconfident in several areas:

Overconfidence in Perspectives

We think we understand the world and what’s going on, because of our Narrative Fallacy (how we create flawed stories to explain the past, which in turn shape our views of the world and our expectations of the future), and Hindsight Illusion (the tendency to forget what we used to believe, once major events or circumstances change our perspectives).

Overconfidence in Expertise

We’re also overconfident in the validity of our skills, formulas and intuitions, which are unfortunately not valid in many circumstances. In the book, Kahneman explains when we can trust “expert intuition”, and when we can’t.

Over-Optimism

Finally, we tend to be overly optimistic and take excessive risks. Kahneman explains the planning fallacy and how we should balance it using a more objective “outside view”.

Read more about these fallacies in our full 15-page summary.

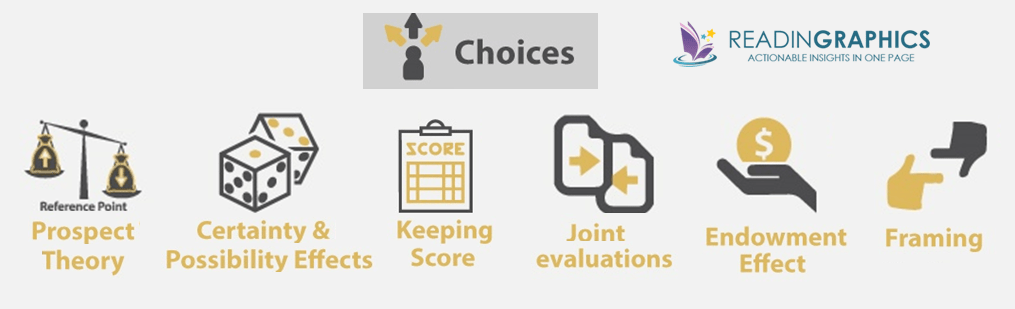

Heuristics Affect Choices

How we perceive and think about inputs affects our decision-making. Using exercises and examples, the book helps us to see our own decision-making processes at work, and to understand why our heuristics can result in flawed and less-than-optimal decisions.

The Prospect Theory

In particular, Prospect Theory (the work for which Kahneman won the Nobel Memorial Prize in Economics) is built on 3 key ideas:

- The absolute value of money is less vital than the subjective experience that comes with changes to your level of wealth. For example, having $5,000 today is “bad” for Person A if he owned $10,000 yesterday, but it’s “good” for Person B if he only owned $1,000. The same $5,000 is valued differently because people don’t just attach value to wealth – they attach values to gains and losses.

- We experience diminishing sensitivity to changes in wealth, e.g. losing $100 hurts more if you start with $200 than if you start with $1,000.

- Loss aversion. We basically hate to lose money, and we weigh losses more than gains. To illustrate, look at these 2 options:

- 50% chance to win $1,000 OR get $500 for sure

=> Most people will choose the latter

- 50% chance to lose $1,000 OR lose $500 for sure

=> Most people will choose the former

Generally, our brain processes threats and bad news faster, people work harder to avoid losses than to attain gains, and they work harder to avoid pain than to achieve pleasure.
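The three ideas above can be sketched with a simple value function. This is only an illustration under stated assumptions: the power-function shape and the parameters (curvature 0.88, loss-aversion coefficient 2.25) are the median estimates commonly quoted from Tversky and Kahneman’s 1992 paper, not figures from this summary, and the sketch deliberately ignores probability weighting.

```python
ALPHA = 0.88   # assumed curvature: diminishing sensitivity to larger changes
LAMBDA = 2.25  # assumed loss-aversion coefficient: losses loom larger than gains


def value(change: float) -> float:
    """Subjective value of a gain or loss relative to a reference point."""
    if change >= 0:
        return change ** ALPHA
    return -LAMBDA * (-change) ** ALPHA


def gamble_value(outcomes: list[tuple[float, float]]) -> float:
    """Value of a gamble given (probability, change) pairs.
    Uses raw probabilities, i.e. no decision weights (a simplification)."""
    return sum(p * value(x) for p, x in outcomes)


if __name__ == "__main__":
    # Reference dependence: the same $5,000 is a loss for A and a gain for B.
    print(value(5_000 - 10_000), value(5_000 - 1_000))
    # Gains: the sure $500 beats a 50% shot at $1,000.
    print(value(500) > gamble_value([(0.5, 1_000), (0.5, 0)]))    # True
    # Losses: the 50% gamble beats a sure $500 loss.
    print(gamble_value([(0.5, -1_000), (0.5, 0)]) > value(-500))  # True
```

Under these assumed parameters, the curvature alone reproduces both choices in the 2 options above, while the loss-aversion coefficient makes a $100 loss weigh more than a $100 gain.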

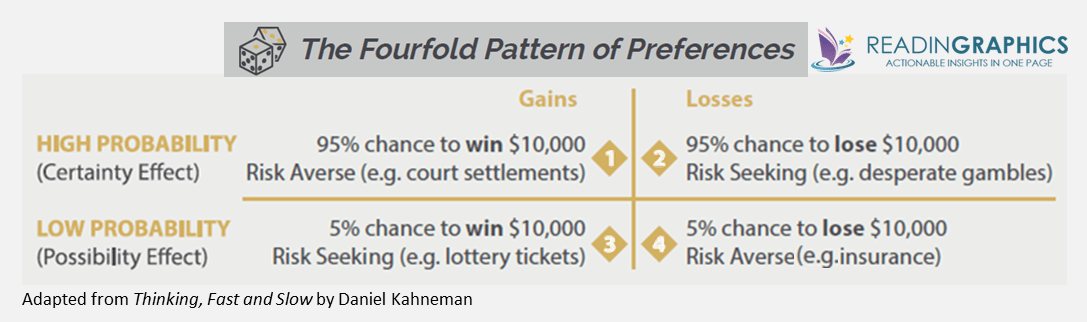

The Fourfold Pattern of Preferences

The Fourfold Pattern of Preferences also helps us to understand the Certainty Effect and Possibility Effect, and how we evaluate gains and losses.

Essentially, we tend to take irrationally high risks under some circumstances, and are irrationally risk averse under others. Read more about how heuristics affect choices in our full book summary.
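One way to picture the Possibility Effect and Certainty Effect is with a decision-weight curve that overweights small probabilities and underweights near-certain ones. The sketch below uses the one-parameter weighting function from Tversky and Kahneman’s 1992 paper, with their estimate of 0.61 for gains. Both the functional form and the parameter are assumptions brought in for illustration; this summary does not specify them.

```python
def decision_weight(p: float, gamma: float = 0.61) -> float:
    """Subjective weight given to a probability p (assumed functional form).
    gamma < 1 bends the curve: small p is overweighted, large p underweighted."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")
    numerator = p ** gamma
    return numerator / (numerator + (1 - p) ** gamma) ** (1 / gamma)


if __name__ == "__main__":
    for p in (0.01, 0.5, 0.99):
        print(p, round(decision_weight(p), 3))
```

Under this assumed curve, a 1% chance is treated as if it were several times more likely (the Possibility Effect, which is why lottery tickets and insurance appeal), while a 99% chance is treated as noticeably less than sure (the Certainty Effect, which is why we pay a premium to remove the last sliver of risk).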

Our Two Selves

In a nutshell, our heuristics influence our choices, which can be irrational, counter-intuitive and sub-optimal. It’s impossible to totally avoid biases and errors from System 1, but we can make a deliberate effort to slow down and utilize System 2 more effectively, especially when stakes are high.

In his research on happiness, Kahneman also found that we each have an “experiencing self” and a “remembering self” – our memories override our actual experiences, and we make decisions with the aim of creating better memories (not better experiences). The book explains more about the “peak-end rule”, “affective forecasting” and how we can improve our experienced well-being.
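As a rough sketch of how the two selves can disagree, the snippet below scores an unpleasant experience two ways: by the total discomfort actually lived through, and by the peak-end rule, where the remembered rating is approximated as the average of the worst moment and the final moment. The discomfort readings are made-up numbers for illustration only.

```python
def experienced_total(samples: list[float]) -> float:
    """What the experiencing self goes through: discomfort summed over time."""
    return sum(samples)


def remembered_rating(samples: list[float]) -> float:
    """What the remembering self keeps: average of the peak and the end."""
    return (max(samples) + samples[-1]) / 2


if __name__ == "__main__":
    short_episode = [2, 5, 8]        # hypothetical readings; ends at its worst
    long_episode = [2, 5, 8, 4, 2]   # same start, plus a milder tail
    print(experienced_total(short_episode), remembered_rating(short_episode))  # 15 8.0
    print(experienced_total(long_episode), remembered_rating(long_episode))    # 21 5.0
```

The longer episode contains more total discomfort, yet it is remembered as the milder one because it ends gently. A decision based on memory would therefore pick the option that the experiencing self would rate as worse.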

Getting the Most from Thinking, Fast and Slow

In our summary, we’ve outlined the key heuristics and how they affect mental processing. For more examples, details, and actionable tips, do get our complete book summary bundle which includes an infographic, 15-page text summary, and a 27-minute audio summary!

Daniel Kahneman’s book is filled with pages of research, personal accounts, other examples, and exercises to help us experience our System 1 biases and errors at work. At the end of each chapter, Kahneman also shares examples of how you can use the new vocabulary in your daily conversations to identify and describe the workings of your mind and the fallacies around you. The book also includes 2 detailed Appendixes on (a) Heuristics and Biases, and (b) Choices, Values and Frames.

If you found the ideas in this article useful, you can also purchase the book here for a deeper understanding of these powerful economic and psychological concepts!

You can read more about The Fourfold Pattern here.

About the Author of Thinking, Fast and Slow

Thinking, Fast and Slow is written by Daniel Kahneman, an Israeli-American psychologist. He was awarded the Nobel Prize in Economic Sciences in 2002 for his pioneering work that integrated psychological research and economic science. Much of this work was carried out collaboratively with Amos Tversky. Kahneman is professor emeritus of psychology and public affairs at Princeton University’s Woodrow Wilson School. Kahneman is also a founding partner of TGG Group, a business and philanthropy consulting company. In addition to the Nobel prize, he was listed by The Economist in 2015 as the seventh most influential economist in the world.

Thinking, Fast and Slow Quotes

“We can be blind to the obvious, and we are also blind to our blindness.”

“In the economy of action, effort is a cost, and the acquisition of skill is driven by the balance of benefits and costs. Laziness is built deep into our nature.”

“You know far less about yourself than you feel you do.”

“People are prone to apply causal thinking inappropriately, to situations that require statistical reasoning.”

“It is the consistency of the information that matters for a good story, not its completeness.”
